Note: This article has been updated and expanded for 2020. Facebook split testing has never been more important for advertisers who want to control their budgets and get the best performance from their creative.

If you have never advertised on Facebook before, you may feel a bit scared launching your first campaign when so many unknown variables could impact its performance.

This is why Facebook recently introduced a tool that gives both new and experienced advertisers a more controlled way to launch their campaigns, with less worry and more potential for insight.

The tool is called “Split Tests”. In marketing terms, it lets you run A/B tests within Facebook, in which only one distinct element is tested across multiple ad sets.

Because the performance of any Facebook campaign rests on a variety of variables (audience, bidding, placement, and creative), testing these elements separately gives marketers a better understanding of which elements yield the best performance, and therefore where the potential for growth lies.

In this updated article on Facebook Split Testing, we cover the top 7 split tests every new Facebook advertiser should start with:

_________________________

Why try Facebook Split Testing:

- Facebook Split Testing randomizes your audience into non-overlapping segments, so audience overlap does not skew the results for the variable being tested.

- You will receive statistically significant data about the variable you are testing, because Facebook gives each ad set an equal chance in the auction and focuses on only one distinct variable.

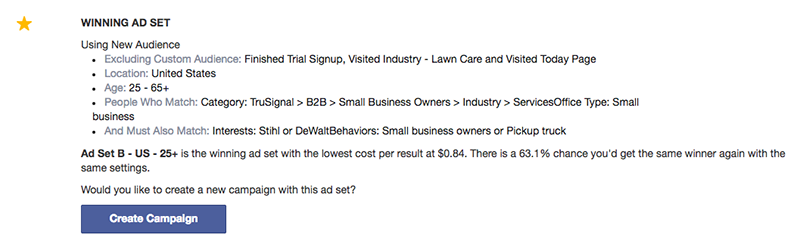

- After the split test is complete, you’ll get a notification and an email with the results. Facebook even estimates, as a percentage, how likely you are to get the same results if you run the campaign again with the winning ad set. These insights can then fuel your ad strategy and help you design your next campaign with more confidence.
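Facebook does not publish the exact methods behind its audience split or its “chance of getting the same results” figure, so the sketch below only illustrates the two general ideas under our own assumptions: hashing each user ID into exactly one test cell (so cells cannot overlap), and a Monte Carlo estimate, using a Beta posterior per ad set, of how often ad set A’s true conversion rate would beat ad set B’s. The function names `assign_cell` and `prob_winner_repeats` and all numbers are hypothetical.

```python
import hashlib
import random


def assign_cell(user_id: str, test_id: str, n_cells: int) -> int:
    """Hypothetical helper: map a user to exactly one cell (0..n_cells-1) of a test.

    Hashing the test ID together with the user ID means a given person always
    lands in the same cell for this test, so the cells never overlap.
    """
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % n_cells


def prob_winner_repeats(conv_a: int, n_a: int, conv_b: int, n_b: int,
                        draws: int = 20_000, seed: int = 0) -> float:
    """Hypothetical helper: estimate P(true rate of A > true rate of B).

    Each ad set gets a Beta(1 + conversions, 1 + non-conversions) posterior
    (a uniform prior); we sample both many times and count how often A wins.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_a > rate_b:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    print(assign_cell("user-42", "audience-test", 3))
    print(prob_winner_repeats(120, 5000, 90, 5000))
```

For example, `prob_winner_repeats(120, 5000, 90, 5000)` compares a hypothetical ad set with 120 conversions from 5,000 people against one with 90; a result close to 1 suggests the winner would likely win again, while a value near 0.5 means the test could not separate the two.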

Steps to set up split tests

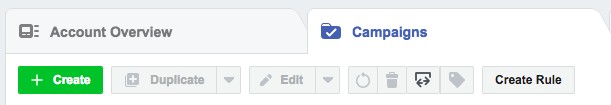

- From the Ads Manager Campaign view tab, click the green “+ Create” button to start the guided creation tool

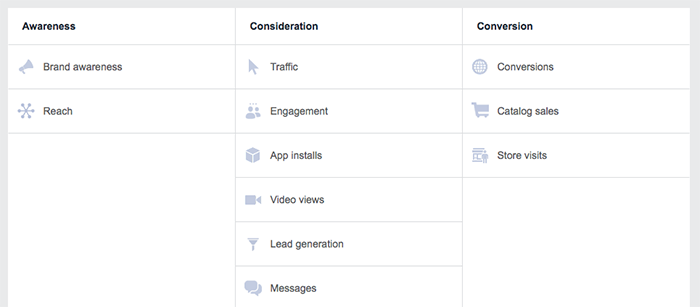

- Select your campaign’s objective

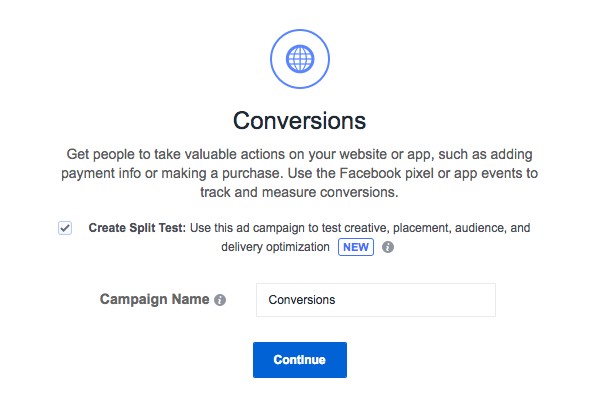

- Check the box “Split Test” and name your campaign. We suggest using a standard naming convention such as [Account Name] | Split Test | [Variable tested] | [Campaign Objective]

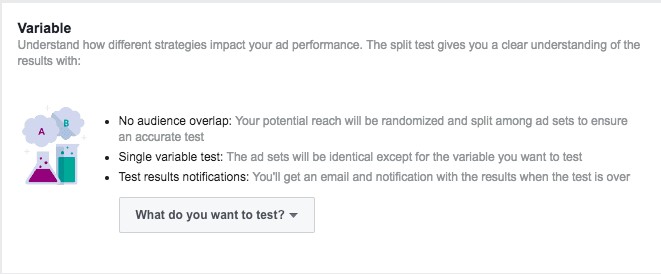

- On the next page, choose your testing variable from the “What do you want to test?” drop-down menu. There are four to choose from: Audience, Creative, Placement, and Bidding

- Proceed with the campaign build as usual. Depending on the testing variable, you will be presented with a number of input fields to define each variation. For example, if you are testing the audience, you will have to fill in at least 2 separate audience targets, each with its own demo/geo/interests/custom audience fields. All other settings for the test, including the creative, will remain the same.

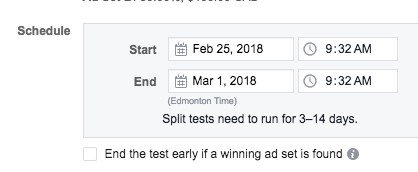

- When choosing the schedule for your split test, make sure the start time is at least 15 minutes after the time you create it. We recommend setting the start time at least 30 minutes out to give yourself enough time to finish and publish your campaign build.
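The naming convention and scheduling buffer above are easy to apply consistently with a small helper. This is a hypothetical sketch (neither function is part of any Facebook API); it assumes you build the name yourself and enter the start time manually in Ads Manager. "Acme" and the date are placeholder values.

```python
from datetime import datetime, timedelta

MIN_LEAD_MINUTES = 15  # minimum gap between creating the test and its start


def split_test_name(account: str, variable: str, objective: str) -> str:
    """Hypothetical helper: [Account Name] | Split Test | [Variable tested] | [Campaign Objective]."""
    return " | ".join([account, "Split Test", variable, objective])


def earliest_start(now: datetime, buffer_minutes: int = 30) -> datetime:
    """Hypothetical helper: suggest a start time `buffer_minutes` after `now` (30 by default)."""
    if buffer_minutes < MIN_LEAD_MINUTES:
        raise ValueError("start time must be at least 15 minutes away")
    return now + timedelta(minutes=buffer_minutes)


print(split_test_name("Acme", "Audience", "Conversions"))
# Acme | Split Test | Audience | Conversions
print(earliest_start(datetime(2020, 5, 4, 9, 12)))
# 2020-05-04 09:42:00
```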

Types of Facebook Split Testing we recommend you start with:

Variable tested: Audience. Test on Funnel: Top/Awareness

When you have very little insight into who your target audience is, you may start with a simple split test that will help you determine which audience profiles or lifestyles are most relevant to your brand.

We suggest comparing at least 3 types of audiences: one built on wide demographics only (your geo, age targets, and gender); one that layers on interests, behaviors, relationship status, or job functions you know from your research; and a lookalike audience based on your email leads or past customers.

Depending on the optimization goal (linked to your funnel objective), you will gain valuable insights about your target audience for future campaigns.

- Ad Set A- Wide Demo (Age and Gender)

- Ad Set B- Wide Demo + Interests or Behaviors

- Ad Set C- Wide Demo + Lookalike Audience

Why test your audience more heavily now than ever? Why does it matter?

CPMs have declined as more people spend time at home, use their devices to consume media, news, and entertainment, and shift their behavior online. Typically, CPMs are lowest with broad targeting, compared with custom audiences (CRM lists or engagement audiences) and lookalike audiences.

We recommend testing ad sets built on demographic/geo vs. interest vs. behavioral targeting, then amplifying the winning audiences in CBO (Campaign Budget Optimization) campaigns.

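Variable tested: strength of Lookalike similarity based on a single source audience. Test on Funnel: Education (consideration) or Conversion

A 1% lookalike most closely matches your source audience; higher percentages reach a larger but less similar audience.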

- Ad Set A- Lookalike with 1% similarity

- Ad Set B- Lookalike with 3% similarity

- Ad Set C- Lookalike with 5% similarity

Variable tested: Animations vs. Static Ads. Test on Funnel: Education (consideration)

Users on Facebook respond differently to static ads and to short-form and long-form animations. Certain creatives do well for prospecting, while others may perform better for remarketing. Split testing them will help you determine which creatives maximize your audience response in the education stage of the funnel (also known as Consideration).

- Ad Set A- Carousel Ads

- Ad Set B- Explainer Video- Short Form (6-15 secs)

- Ad Set C- Explainer Video- Long Form (30+ secs)

Hack the learning stage via smaller split tests – why does this matter now?

Facebook’s delivery algorithm relies on a learning phase: the period when the delivery system still has a lot to learn about an ad set. During the learning phase, the delivery system explores the best way to deliver your ad set, so performance is less stable and cost per action (CPA) is usually worse.

It is recommended that each ad set generate at least 50 conversions within 7 days in order to exit the learning phase.

Meeting this recommendation can be challenging when the optimization events you’re focusing on are costly. What can you do about it? One way to hack the learning phase is to opt into smaller, shorter Split Tests, which may reveal the best-performing elements with some level of confidence.
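The arithmetic behind that threshold is simple: 50 conversions in 7 days at a given CPA sets a minimum daily spend per ad set, multiplied by the number of ad sets in the test. The sketch below assumes CPA stays constant during learning (it usually does not), and the $40 and $8 CPAs in the example are hypothetical.

```python
LEARNING_EVENTS = 50  # conversions per ad set needed to exit learning
LEARNING_DAYS = 7     # window in which those conversions should happen


def min_daily_budget(cpa: float, ad_sets: int = 1) -> float:
    """Daily spend for `ad_sets` ad sets to each reach 50 conversions in 7 days at `cpa`."""
    return LEARNING_EVENTS * cpa / LEARNING_DAYS * ad_sets


def days_to_exit(daily_budget_per_ad_set: float, cpa: float) -> float:
    """Days one ad set needs to collect 50 conversions at a given daily budget and CPA."""
    conversions_per_day = daily_budget_per_ad_set / cpa
    return LEARNING_EVENTS / conversions_per_day


print(round(min_daily_budget(40, ad_sets=3), 2))  # 857.14
print(round(min_daily_budget(8, ad_sets=3), 2))   # 171.43
print(days_to_exit(100, 40))                      # 20.0
```

At a hypothetical $40 CPA, three ad sets need roughly $857 per day between them, and a single ad set spending $100 per day would take 20 days rather than 7. At a hypothetical $8 Add to Cart CPA the same test drops to about $171 per day, which is why the shallower events recommended for performance-based clients later in this article are easier to test.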

Instead of setting up a regular ad campaign, build a Split Test campaign that tests 1 key variable (Audience, Creatives, Placements, or Bidding), then support the winner with a bigger budget. We recommend focusing Split Tests on Audiences and/or Bidding rather than Creatives or Placements: the All Placements setting is a recommended best practice, and Creatives can be tested using Dynamic Creative Optimization once you figure out the best audience to scale.

Variable tested: Short Form Animation vs. Long Form Animation. Test on Funnel: Awareness or Education (consideration)

Split testing can show you how well your animations perform for different sales funnel objectives. At the top of the funnel, you may expect the short-form animation to deliver better results, since the audience is unfamiliar with your brand and will likely skip long-form messaging.

But if you are testing short vs. long-form animations at a consideration stage where your audience is already familiar with your brand (for example, you included Web visitors in the targeting), your long-form animation may do better, because a warmer audience is more likely to engage with a more in-depth explanation.

Split test these two creatives across various audience types to determine which one works better for each objective and funnel stage.

- Ad Set A- Short Form Animation (up to 6 secs)

- Ad Set B- Long Form Animation (10-30 secs)

Variable tested: Placement. Test on Funnel: All

Choose the following settings for your placement variable. Your audience and creative settings will be the same across both ad sets, so pick the ones that are most relevant to your optimization goal (Add to Cart, Purchase, Content View, etc.).

- Ad Set A- Desktop Only

- Ad Set B- Mobile Only

Variable tested: WFH/COVID-19-related ad copy vs. your general always-on ad copy

Everyone has gone through a major shift, adapting to a new reality where most daily activities are carried out from home.

There is significant uncertainty, and consumers are adopting new behaviors.

Your regular (pre-pandemic) ad copy may no longer sound as relevant, which may hurt your ads’ CTR. Split testing it against new creatives is essential in determining what to keep and what to pause.

You can approach this by: 1) running A/B tests with Split Test campaigns (select Creative as the test variable), 2) adding new ad copy to your DCO ads, or 3) creating standalone ads with revised ad copy and pairing them with your best-performing creatives.

Our clients have noticed a significant change in performance after their creatives were audited and new “Quarantine” copy was introduced.

Variable tested: Target Cost bidding, to gain more control over your budget

For businesses operating under limited budgets and unlimited uncertainty, it’s important to make every marketing dollar go the distance with Facebook Split Testing.

One way to do so on Facebook is to test Target Cost bidding, where you test a specific target cost per conversion for the event you’re optimizing your ads for (Lead events, Sales, Form fill-outs).

A good approach is to test at least 3 bid levels per event, spaced roughly 20% apart. For example, if you are optimizing for Add to Cart events and want to land at $15 per Add to Cart on average, you could test 3 Target Costs using a Split Test campaign setup: $12 vs. $15 vs. $18.
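A ±20% ladder like this can be generated for any goal cost. The sketch below assumes bids are spaced by a fixed fraction of your goal; `target_cost_ladder` is a hypothetical helper, not a Facebook setting, and you would still enter each value by hand when building the ad sets.

```python
from typing import List


def target_cost_ladder(goal: float, step: float = 0.20, levels: int = 3) -> List[float]:
    """Hypothetical helper: `levels` Target Cost bids centered on `goal`.

    Neighboring bids differ by `step` times the goal (0.20 = 20%).
    """
    if levels < 3 or levels % 2 == 0:
        raise ValueError("use an odd number of levels, at least 3")
    half = levels // 2
    return [round(goal * (1 + i * step), 2) for i in range(-half, half + 1)]


print(target_cost_ladder(15.0))  # [12.0, 15.0, 18.0]
```

A 5-level ladder with 10% steps, `target_cost_ladder(10.0, step=0.1, levels=5)`, gives $8 to $12 in $1 increments, but every extra level splits the same budget across one more ad set.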

How long should all tests run?

Facebook allows split tests to run for between 3 and 14 days. We recommend running every test for at least 5 to 7 days, depending on the variable being tested and your budget.

It is important to understand that for certain optimization goals, it may take longer for the platform to determine a winning set because more impressions (and thus budget) will be needed to produce statistically significant results.

Recommendation for performance-based clients

If your primary goal is a deep-funnel conversion, such as a long lead form submission or a product sale, we recommend focusing your early split tests on shallower conversion events.

For example, for a long-form lead acquisition objective, you might launch your first split test optimizing for Landing Page Views instead of leads. With this as your campaign optimization event, you will get more data and learnings from a split test focused on, say, placements or creative type.

For e-commerce clients, who ultimately optimize for purchases, we suggest starting split tests with an Add to Cart or Checkout Initiation optimization goal. Facebook will then optimize for a shallower conversion that can be obtained at lower CPAs, so your variable test is more likely to produce statistically significant, relevant, and actionable results for your future campaigns.

_________________________

Summary:

Once your split test is complete, Facebook will email you a summary of results with the winning ad set’s details. Facebook also includes the probability of your campaign getting the same results if you duplicate it and run it again.

In other words, if you create a new campaign with the winning ad set’s variables, you have a good chance of getting the same results. Success is never guaranteed, but this is a good starting point!

Here is what you’ll get in your email:

At Abacus, we were able to take the winning ad sets from our split tests and scale them by putting more budget behind them. This did not work for every split test, as some did not produce the desired CPAs in the first place, but that was specific to performance-based campaigns where the deciding factor was cost per purchase.

For all other top and mid-funnel split tests, we discovered top performing variables that produced sustainable results in future campaigns.

Wishing you good luck split testing! Scratch that. Wishing you good hypothesis testing instead. Luck won’t help here.

Want more performance out of your Facebook ads? Reach out to Abacus.Agency